Since Kettle is a Java based tool, I need JDBC drivers for each of my tools. I decided to try this in a two step process just to make things interesting. First, I will export the data from Classic Cars to a series of text files. Then I will import those text files into Access. Nothing is stopping me from going directly into the Access database, I just wanted to simulate the process that I usually encounter more often than not, which is database-to-text-to-database.

Kettle 2.5.0 comes distributed as a single zip file, and installation is as simple as extracting the zip to a given location. Not wanting to be to terrible original, I just unzipped the file into C:\Kettle. Kettle is broken up into 4 versions. I only needed 1, Spoon, which designs Transformations and Jobs. I’m only concerned with Transformations for my experiment. So I launch Spoon.

Figure 1. Kettle Start Up Screen

When starting the program, I tried to set up a repository. The repository is a database of transformation and jobs. I thought this would be useful, however, I only had Derby handy. The derby driver that came with Kettle did not want to work with my database, and copying over the Derby drivers into the C:\Kettle\libext\JDBC folder. Long story shot, the repository didn’t quite work, so I skipped it, and went with No Repository to continue on.

Now, since I use the Classic Cars database for other things that are not part of the Embedded distribution of BIRT, I have extracted the sample databases JAR file to a folder at C:\ClassicCarsDatabase. So the first step is to research what the JDBC URL will be. In my case, the JDBC URL is jdbc:derby:C:/eclipse/GWTBirt/BIRTGwt/src/CCDataBase, and the driver I will use is org.apache.derby.jdbc.EmbeddedDriver. I know these since I use them with Hibernate, so I know these will work.

So, now that I am in Spoon, I go up to File, New, and Transformation. Under the Main Tree pane, I double-click on Database Connections. Now, since I had issues with Kettles Derby driver, I have to select Generic database, which will allow me to specify a JDBC URL and driver to use. So under the Generic Tab, I use the JDBC information I mentioned above.

Figure 2. Database Connection

Now that I have specified a database connection, I can drag and drop the Classic Cars database connection over to the Transformations tab. This will automatically create a new Table Input step for me. It will also bring up the edit dialog for the Table Input step. From the dialog I click on Get SQL Statement, which brings up a graphical browser of the database schemas and tables. Having had issues with schema namespacing with Hibernate using this driver and JDBC URL in the past, I actually browse to the full Schemas.Schema Name.tables path. Now, since I am simulating a data export of the database, I need to create a straight full select statement for each table. So, starting with my Employees table, I browse to the Schemas.CLASSICMODELS.EMPLOYEES entry in the browser and double-click. When asked if I want to include the field names, I say yes. I click on the Preview button to make sure it works. Once done, I hit OK. I repeat the step for all tables under the ClassicModels schema.

Figure 3. The Table Input Editor

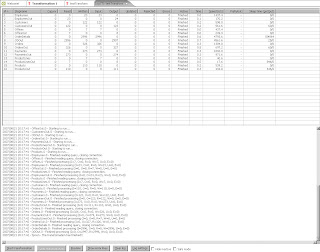

Once I have all of my input tables created, I now need to specify my output text files. So, Under the Core Objects pane on the left hand side, I select the Output drop down, and drag over the Text File Output object. Once I drag it over, I actually need to double click on the object in order to bring up the editor. But before I do, I need to link which Table Input will go with it. I want to create a text file based on Employees. So in order to create the hop, I need to hold down the left shift key, and then try to drag and drop the Employees table Input object to the text output. This will create an arrow that points from the Employees Table Input to the Text Output. Now I Double Click on the Table Output object. For the filename I specify Employees since this will represent the Employees table. I then go over to the Fields tab and click on the Get Fields button. This will take care of retrieving all the fields to be outputted. I hit OK, then repeat the same steps for all the tables. Once done, I click on the execute button to actually execute. Of course, running the processes created would be useless if they could only be run from within Spoon. Fortunately, that’s what Pan is for. Read the documentation for more information on using Pan. (Note, due to the binary field in the ProductLines tabe, I did not export that field to the text files.)

Figure 4. The Stats Screen After Running the Transformation

Going into Access is the exact opposite. However, I did run into a few issues. I needed either a JDBC driver, or to set up an ODBC connection. I went with the later since I couldn’t find a free JDBC driver for access. Once I had that, I was all set. With the transformations, I can easily script the entire process using Pan or Kitchen. The scenario I used to picture was a large data transfer of employee certificates to sell insurance. Since this list would come in as SSN, I could have used Kettle to read in the text file, run the transform to replace the SSN’s with employee ID’s, and load into a database. I think I will keep this tool in mind for the future.

1 comment:

Hi Tom,

Derby is a very recent addition to the Kettle stack. It is meant for embedded use and is IMHO not really suited to put a multi-user repository on. That being said, feel free to file a bug for the repository problem. In many occasions we find a work-around for a feature that is lacking in the various databases.

Thanks for the review,

Matt

P.S. PDI bugs are here: http://jira.pentaho.org/browse/PDI

Post a Comment