In his last article, Harlan provides an example Perl script that retrieves various metadata from Word documents. I thought this would be interesting to check out.

Since I will be running this in a Windows environment, I need a Perl interpreter. My preference is ActiveState's ActivePerl, although the Cygwin Perl package would also work. ActivePerl is available from here. There are two install packages: a graphical installer and a DOS batch installer. As far as I can tell, the only difference between the two is that they create different folders under the Windows Start Menu. I will show both installers.

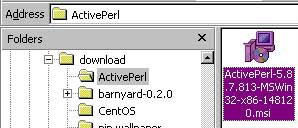

I will cover the graphical installer first, since it is the package used more often. I downloaded the ActiveState ActivePerl MSI package from their website. To run the installer I go to the location to which I downloaded it and double-click on the install file pictured below.

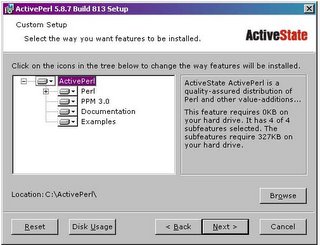

On the option screen, I select all options to be installed on the local hard drive, and set my install directory to C:\ActivePerl.

I keep the default options on the next screen and click Next until the install completes.

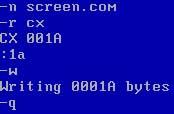

To install with the AS package, I extract the ActivePerl archive file to C:\temp\ap_install. The package comes with a DOS batch file called installer.bat for doing the install. Below is my DOS session of the install process.

C:\TEMP\ap_install\ActivePerl-5.8.7.813-MSWin32-x86-148120>dir *.bat

Volume in drive C is Local Disk

Volume Serial Number is 7BC4-13DA

Directory of C:\TEMP\ap_install\ActivePerl-5.8.7.813-MSWin32-x86-148120

06/06/2005 02:19p 15,757 Installer.bat

1 File(s) 15,757 bytes

0 Dir(s) 2,704,973,312 bytes free

C:\TEMP\ap_install\ActivePerl-5.8.7.813-MSWin32-x86-148120>installer.bat

Welcome to ActivePerl.

This installer can install ActivePerl in any location of your choice.

You do not need Administrator privileges. However, please make sure

that you have write access to this location.

Enter top level directory for install [c:\Perl]: C:\ActivePerl

The typical ActivePerl software installation requires 80 megabytes.

Please make sure enough free space is available before continuing.

ActivePerl 813 will be installed into 'C:\ActivePerl'

Proceed? [y] y

If you have a development environment (e.g. Visual Studio) that you

wish to use with Perl, you should ensure that your environment (e.g.

%LIB% and %INCLUDE%) is set before installing, for example, by running

vcvars32.bat first.

Proceed? [y]

Create shortcuts to the HTML documentation? [y]

Add the Perl\bin directory to the PATH? [y]

Create Perl file extension association? [y]

Create IIS script mapping for Perl? [y] n

Create IIS script mapping for Perl ISAPI? [y] n

Copying files...

3002 File(s) copied

Finished copying files...

Relocating...done (95 files relocated)

Configuring C:\ActivePerl\lib\Config.pm for use in C:\ActivePerl...

Configuring Perl ...

Configuring PPM for use in C:\ActivePerl...

Setting 'tempdir' set to 'C:\DOCUME~1\jward\LOCALS~1\Temp'.

If you are behind a firewall, you may need to set the following

environment variables so that PPM will operate properly:

set HTTP_proxy=http://address:port [e.g. http://192.0.0.1:8080]

set HTTP_proxy_user=username

set HTTP_proxy_pass=password

Building HTML documentation, please wait...

Thank you for installing ActivePerl!

Press return to exit.

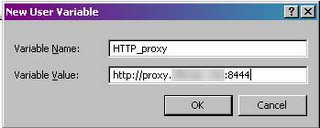

Note the line about setting up the HTTP proxy. If you are behind a firewall and are required to go through a proxy server, you will need to set this up. This is not noted in the graphical installation.
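The proxy variables named in the installer output can be checked before PPM is launched. Below is a minimal sketch in Python (used here purely for illustration; it is not part of ActivePerl or PPM) that reports which of the three variables are set. The function name `proxy_settings` is hypothetical.

```python
import os

# Variable names as printed by the ActivePerl installer.
PROXY_VARS = ("HTTP_proxy", "HTTP_proxy_user", "HTTP_proxy_pass")


def proxy_settings(env=None):
    """Return a dict of the proxy variables that are set and non-empty.

    Windows treats environment variable names case-insensitively, so the
    lookup here ignores case as well.
    """
    env = os.environ if env is None else env
    lowered = {name.lower(): value for name, value in env.items()}
    found = {}
    for name in PROXY_VARS:
        value = lowered.get(name.lower(), "")
        if value:
            found[name] = value
    return found


if __name__ == "__main__":
    settings = proxy_settings()
    if "HTTP_proxy" not in settings:
        print("HTTP_proxy is not set; PPM may fail to reach its repository.")
    for name, value in settings.items():
        # Never echo the password itself.
        shown = "(set)" if name == "HTTP_proxy_pass" else value
        print(f"{name} = {shown}")
```

Running this from a fresh command prompt should show whether a newly created system variable is visible to new processes.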

I downloaded Harlan’s script from here. The archive contains a single Perl script file titled wmd.pl. I extract the file to C:\temp\ap_install. For testing, I will use the Word version of one of my previous articles, Sguil Reporting with Birt. I copy this file to C:\temp\ap_install for simplicity's sake. Eagerly, I tried to run the script:

c:\activeperl\bin\perl wmd.pl "Sguil reporting with BIRT3.doc"

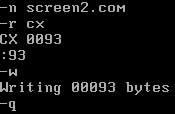

And I got garbage. I reviewed Harlan’s blog entry, which clued me in on some additional steps I need to take. The script requires three packages that are installed through ActivePerl's package manager, PPM (the Perl/Programmer's Package Manager). I will need to run a series of commands from PPM to install these. PPM is located as pictured below.

Below is the session for trying to install the first package

ppm> install OLE-Storage

Error: No valid repositories:

Error: 500 Can't connect to ppm.ActiveState.com:80 (Bad hostname 'ppm.ActiveStat

e.com')

Error: 500 Can't connect to ppm.ActiveState.com:80 (Bad hostname 'ppm.ActiveStat

e.com')

ppm>

As mentioned before, I need to configure proxy support by setting an environment variable that points to my proxy. I do this from the Windows Control Panel: I go to Settings, Control Panel, and double-click the System icon.

Once the System Properties Window comes up, I select the Advanced tab, then click on the Environment Variables button.

Once in the Environment Variables section, I create a new System Environment Variable by clicking on the New button

And I enter in my proxy info like so:

I exit out and rerun PPM so it can recognize the new environment variable. Below is my install session for the three additional packages. (Note: I edited the results for brevity.)

ppm> install OLE-Storage

====================

Install 'OLE-Storage' version 0.386 in ActivePerl 5.8.7.813.

====================

Downloaded 99928 bytes.

Extracting 40/40: blib/arch/auto/OLE/Storage/.exists

Installing C:\ActivePerl\html\bin\herbert.html

Installing C:\ActivePerl\html\bin\lclean.html

………..<edited>……………………

Successfully installed OLE-Storage version 0.386 in ActivePerl 5.8.7.813.

ppm> install Startup

====================

Install 'Startup' version 0.103 in ActivePerl 5.8.7.813.

====================

Downloaded 15618 bytes.

Extracting 9/9: blib/arch/auto/Startup/.exists

Installing C:\ActivePerl\html\bin\replace.html

………..<edited>……………………

Successfully installed Startup version 0.103 in ActivePerl 5.8.7.813.

ppm> install Unicode-Map

====================

Install 'Unicode-Map' version 0.112 in ActivePerl 5.8.7.813.

====================

Downloaded 449032 bytes.

Extracting 113/113: blib/arch/auto/Unicode/Map/Map.lib

………..<edited>……………………

Installing C:\ActivePerl\bin\mkmapfile.bat

Successfully installed Unicode-Map version 0.112 in ActivePerl 5.8.7.813.

ppm>exit

With the required packages installed, I again try Harlan’s script. (note: I prefix my command with perl this time to make sure I am running in the Perl environment).

C:\TEMP\ap_install>c:\activeperl\bin\perl wmd.pl "Sguil reporting with BIRT3.doc"

--------------------

Statistics

--------------------

File = Sguil reporting with BIRT3.doc

Size = 507904 bytes

Magic = 0xa5ec (Word 8.0)

Version = 193

LangID = English (US)

Document has picture(s).

Document was created on Windows.

Magic Created : MS Word 97

Magic Revised : MS Word 97

--------------------

Last Author(s) Info

--------------------

1 : JWard : H:\Blog entries\REporting with BIRT\Sguil reporting with BIRT.doc

2 : JWard : H:\Blog entries\REporting with BIRT\Sguil reporting with BIRT.doc

3 : ***************: E:\Blog entries\REporting with BIRT\Sguil reporting

with BIRT.doc

4 : ***************: C:\Documents and Settings\Administrator\Application

Data\Microsoft\Word\AutoRecovery save of Sguil reporting with BIRT.asd

5 : Bonnie Taylor : C:\Documents and Settings\btaylor\Desktop\Sguil reporting wi

th BIRT.doc

6 : Bonnie Taylor : C:\Documents and Settings\btaylor\Application Data\Microsoft

\Word\AutoRecovery save of Sguil reporting with BIRT.asd

7 : Bonnie Taylor : C:\Documents and Settings\btaylor\Application Data\Microsoft

\Word\AutoRecovery save of Sguil reporting with BIRT.asd

8 : Bonnie Taylor : C:\Documents and Settings\btaylor\Application Data\Microsoft

\Word\AutoRecovery save of Sguil reporting with BIRT.asd

9 : Bonnie Taylor : C:\Documents and Settings\btaylor\Application Data\Microsoft

\Word\AutoRecovery save of Sguil reporting with BIRT.asd

10 : JWard : C:\Documents and Settings\jward\My Documents\Blog entries\REporting

with BIRT\Sguil reporting with BIRT3.doc

--------------------

Summary Information

--------------------

Title : Sguil is a great platform for IDS operations

Subject :

Authress : ***************

LastAuth : JWard

RevNum : 2

AppName : Microsoft Word 9.0

Created : 28.10.2005, 21:18:00

Last Saved : 28.10.2005, 21:18:00

Last Printed :

--------------------

Document Summary Information

--------------------

Organization : ***************

Success. The script is working correctly, and I can gather a lot of information from its output. Looking at the history, I can see where I created the article under H:\Blog entries\REporting with BIRT (which is on a USB drive). From entry 3, which shows the document under E:\Blog entries\REporting with BIRT\Sguil reporting with BIRT.doc, I can guess one of two things: either a separate user with different drive mappings than JWard opened the document, or it was opened on another machine. Entry 4 tells me the document was open at least long enough for one auto-save to occur. Bonnie Taylor, my good friend and editor (where would I be without her?), then opened the document from her Windows Desktop. Bonnie had the document open long enough for at least 4 auto-saves to occur, showing me that she is actually reading the articles I send her :) . Finally, the document was opened by JWard.

The Created and Last Saved entries in the summary appear to reflect when this particular copy of the file was created. This document was restored from an email archive on that date, but it was actually created on Sept. 28th, so this information would be suspect in an actual investigation.
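The reasoning above (who touched the document, from which drives, and how many auto-saves happened) can be sketched in a few lines. The Python below is only an illustration and is not part of wmd.pl. The entries are typed in by hand from the Last Author(s) Info output, with wrapped console lines rejoined, and the helper name `summarize` is hypothetical.

```python
import ntpath

DOCS = r"C:\Documents and Settings"
BLOG = r"\Blog entries\REporting with BIRT\Sguil reporting with BIRT"
ASD = r"\Application Data\Microsoft\Word\AutoRecovery save of Sguil reporting with BIRT.asd"

# (author, path) pairs from the output above; masked author names are kept
# exactly as the script printed them.
ENTRIES = [
    ("JWard", "H:" + BLOG + ".doc"),
    ("JWard", "H:" + BLOG + ".doc"),
    ("***************", "E:" + BLOG + ".doc"),
    ("***************", DOCS + r"\Administrator" + ASD),
    ("Bonnie Taylor", DOCS + r"\btaylor\Desktop\Sguil reporting with BIRT.doc"),
    ("Bonnie Taylor", DOCS + r"\btaylor" + ASD),
    ("Bonnie Taylor", DOCS + r"\btaylor" + ASD),
    ("Bonnie Taylor", DOCS + r"\btaylor" + ASD),
    ("Bonnie Taylor", DOCS + r"\btaylor" + ASD),
    ("JWard", DOCS + r"\jward\My Documents" + BLOG + "3.doc"),
]


def summarize(entries):
    """Collect distinct authors and drive letters, and count auto-recovery saves."""
    authors, drives, autosaves = [], [], 0
    for author, path in entries:
        drive = ntpath.splitdrive(path)[0]  # ntpath parses Windows paths on any OS
        if author not in authors:
            authors.append(author)
        if drive not in drives:
            drives.append(drive)
        if path.lower().endswith(".asd"):
            autosaves += 1
    return {"authors": authors, "drives": drives, "autosaves": autosaves}


if __name__ == "__main__":
    for key, value in summarize(ENTRIES).items():
        print(f"{key}: {value}")
```

Keeping the entries in their original order matters, since the order of the list is what lets me read it as a history.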

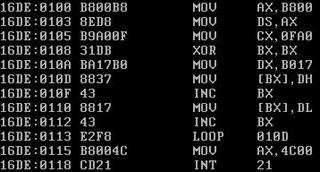

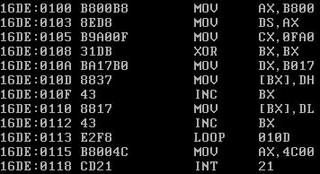

To check the script out further, I run it against the Word document for A Simple Program in Debug:

C:\TEMP\ap_install>c:\activeperl\bin\perl wmd.pl "DOS Debug.doc"

--------------------

Statistics

--------------------

File = DOS Debug.doc

Size = 361472 bytes

Magic = 0xa5ec (Word 8.0)

Version = 193

LangID = English (US)

Document has picture(s).

Document was created on Windows.

Magic Created : MS Word 97

Magic Revised : MS Word 97

--------------------

Last Author(s) Info

--------------------

1 : JWard : C:\Documents and Settings\jward\My Documents\Blog entries\DEBUG\DOS

Debug.doc

2 : JWard : C:\Documents and Settings\jward\My Documents\Blog entries\DEBUG\DOS

Debug.doc

3 : JWard : C:\Documents and Settings\jward\My Documents\Blog entries\DEBUG\DOS

Debug.doc

4 : JWard : C:\Documents and Settings\jward\My Documents\Blog entries\DEBUG\DOS

Debug.doc

5 : JWard : C:\Documents and Settings\jward\My Documents\Blog entries\DEBUG\DOS

Debug.doc

6 : JWard : C:\Documents and Settings\jward\My Documents\Blog entries\DEBUG\DOS

Debug.doc

7 : JWard : C:\Documents and Settings\jward\Application Data\Microsoft\Word\Auto

Recovery save of DOS Debug.asd

8 : : E:\DEBUG\DOS Debug.doc

9 : : C:\Documents and Settings\digiassn\Application Data\Microsoft\Word\AutoR

ecovery save of DOS Debug.asd

10 : : C:\Documents and Settings\digiassn\Application Data\Microsoft\Word\Auto

Recovery save of DOS Debug.asd

--------------------

Summary Information

--------------------

Title : I got a little bored the other day and was feeling a little nosta

lgic for the days of DOS

Subject :

Authress : JWard

LastAuth :

RevNum : 63

AppName : Microsoft Word 9.0

Created : 12.10.2005, 15:06:00

Last Saved : 19.10.2005, 17:21:00

Last Printed :

--------------------

Document Summary Information

--------------------

Organization : ***********

Again, the same kind of information can be gathered by looking at the Last Author(s) Info. I can see JWard made numerous edits; however, only the session at entry 6 was open long enough for the auto-save feature to kick in, as shown in entry 7. Entry 8 was opened by a blank user from another location (USB key), and the document stayed open long enough for 2 auto-saves to occur. In this instance, the Created and Last Saved dates are both correct.
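Comparing the Created and Last Saved stamps is easy to automate. The following Python sketch (an illustration only, not something wmd.pl does) parses the two timestamps and flags the case where they are identical, as they were for the restored Sguil document. It assumes the DD.MM.YYYY, HH:MM:SS layout seen in the output in this article; the function name `editing_span` is hypothetical.

```python
from datetime import datetime

# Layout of the timestamps as they appear in the output in this article.
STAMP_FORMAT = "%d.%m.%Y, %H:%M:%S"


def editing_span(created, last_saved):
    """Return the time between the Created and Last Saved stamps."""
    start = datetime.strptime(created, STAMP_FORMAT)
    end = datetime.strptime(last_saved, STAMP_FORMAT)
    return end - start


if __name__ == "__main__":
    # Values copied from the two Summary Information blocks above.
    documents = [
        ("Sguil reporting with BIRT3.doc", "28.10.2005, 21:18:00", "28.10.2005, 21:18:00"),
        ("DOS Debug.doc", "12.10.2005, 15:06:00", "19.10.2005, 17:21:00"),
    ]
    for name, created, saved in documents:
        span = editing_span(created, saved)
        # A zero-length span is falsy, which makes the flag easy to write.
        note = "" if span else "  <- identical stamps, worth a second look"
        print(f"{name}: {span}{note}")
```

Identical stamps do not prove anything by themselves, but combined with a long Last Author(s) list they are a hint that the dates do not tell the whole story.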

I am impressed with the output of the script. Harlan has proved one of the points I consistently try to make: many times people will downplay the usefulness of scripts because they are not compiled programs, or because they are not written in whatever the programming language of the month is. I disagree with this sentiment. While I try not to judge, I believe the utility provided by a script or a program, regardless of its base language, is a measure of its worth. Take Sguil for example. Sguil provides one of the best, if not the best, platforms for network security analysts to work from, and it is written in Tcl/Tk. And I would say that Sguil is more than simply a script.

On a final note, I have to thank Harlan for his help with some small issues I had with running the script. The first document I tried was truncated from my USB key because I did not shut it down correctly when I copied the document. Since the document was corrupt, I was getting some strange errors from the script. Harlan was kind enough to work with me on finding a solution. I will keep ActivePerl around and try out some of the other scripts that Harlan has provided to the community.
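A quick sanity check before running the script might have saved me some confusion with that truncated file. The Python sketch below is a rough heuristic of my own, not something Harlan's script does: it checks that the file starts with the OLE2 compound file signature used by Word .doc files and that its size is a whole number of 512-byte sectors. The 512-byte sector size is an assumption that fits the common layout, and the function name `looks_intact` is hypothetical.

```python
import os

# Signature at the start of an OLE2 compound file, the container format
# used by Word 97-2003 .doc files.
OLE2_SIGNATURE = bytes.fromhex("D0CF11E0A1B11AE1")
SECTOR_SIZE = 512  # assumption: the common 512-byte sector layout


def looks_intact(path):
    """Heuristic only; passing this check does not prove the file is undamaged."""
    size = os.path.getsize(path)
    with open(path, "rb") as handle:
        header = handle.read(len(OLE2_SIGNATURE))
    if header != OLE2_SIGNATURE:
        return False
    # Such files are normally a whole number of sectors long, so a size
    # that is not a multiple of the sector size hints at truncation.
    return size > SECTOR_SIZE and size % SECTOR_SIZE == 0


if __name__ == "__main__":
    import sys

    for name in sys.argv[1:]:
        verdict = "looks OK" if looks_intact(name) else "possibly truncated or not a .doc"
        print(f"{name}: {verdict}")
```

Both file sizes reported in the Statistics sections above (507904 and 361472 bytes) are exact multiples of 512, which is consistent with this heuristic.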